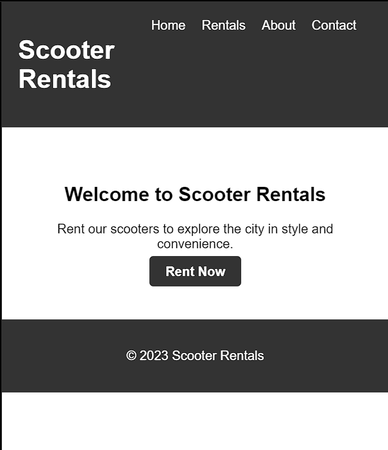

The generated code included a header with a navigation bar, a main section with a welcome message and a call-to-action button, and a footer with a copyright notice. The styling was simple and clean, yet professional-looking. I had to tweak some of the code to fit my specific needs, but overall it was a great starting point.

The generated CSS code also included some basic rules for the layout and form elements, but there was no custom design or attention to detail. Overall, the website looked very generic and lacked any personality or brand identity.

I first tested Chat GPT 4 by asking it to write the code for the problem. To my surprise, the model generated correct and clean code in just a few minutes. It was quite impressive to see the model automatically write code for such a problem.

Next, I tested Google Bard with the same question and got the same result. However, when I copy-pasted the code generated by Bard directly, it did not work because it had initialized a variable incorrectly. After I fixed that error, the code worked as expected.

I was impressed with both models' performance, but I wanted to understand the code better. So I asked each model to explain its code, and both explained it quite well.

Bing AI, on the other hand, went beyond just generating code. It could also generate images and fetch data from the internet, and it answered my questions more efficiently than the other models.

Google Bard, by comparison, appeared unremarkable and uninteresting.

However, it's worth noting that Google Bard is a relatively new AI language model, and it would be unfair to judge it by its early showing. As the model continues to learn and improve, it's possible that it could surpass Chat GPT 4 and even Bing AI in the future.

Overall, it was interesting to see how far AI language models have come and how they can be useful in various fields. I look forward to seeing what the future holds for AI language models and how they will continue to improve our lives.

Author

-Anurag
